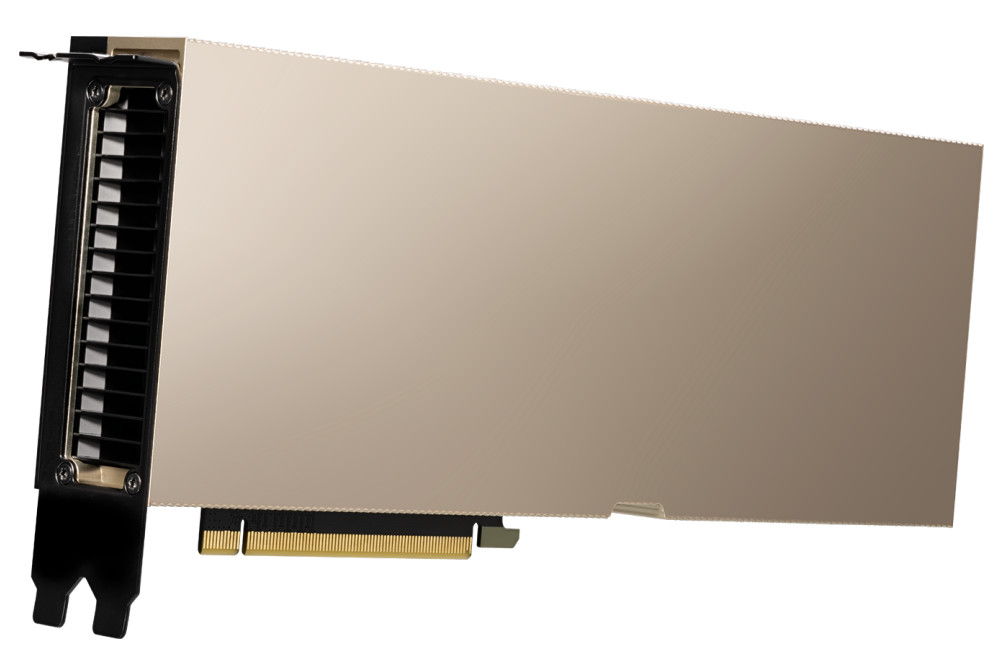

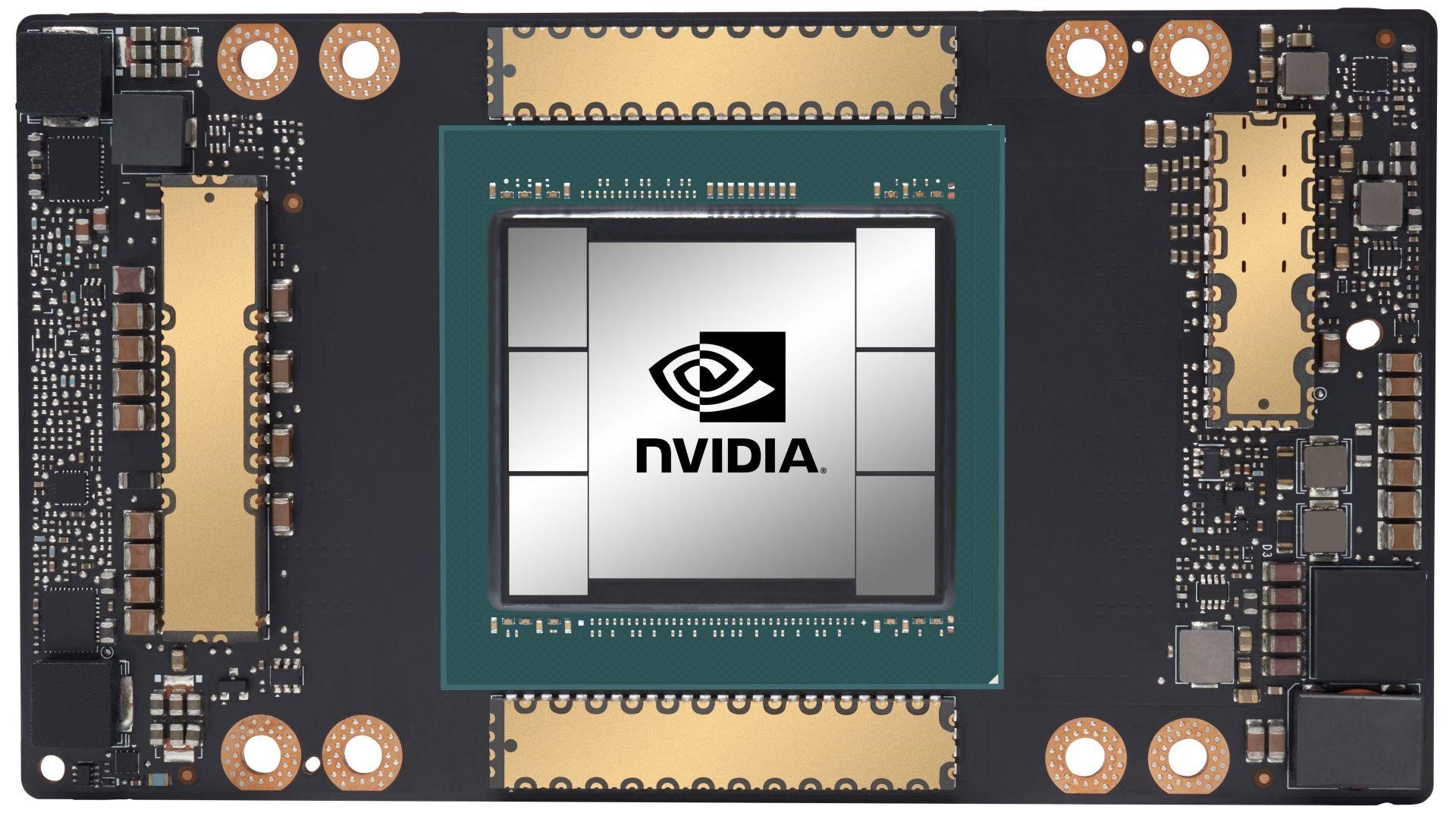

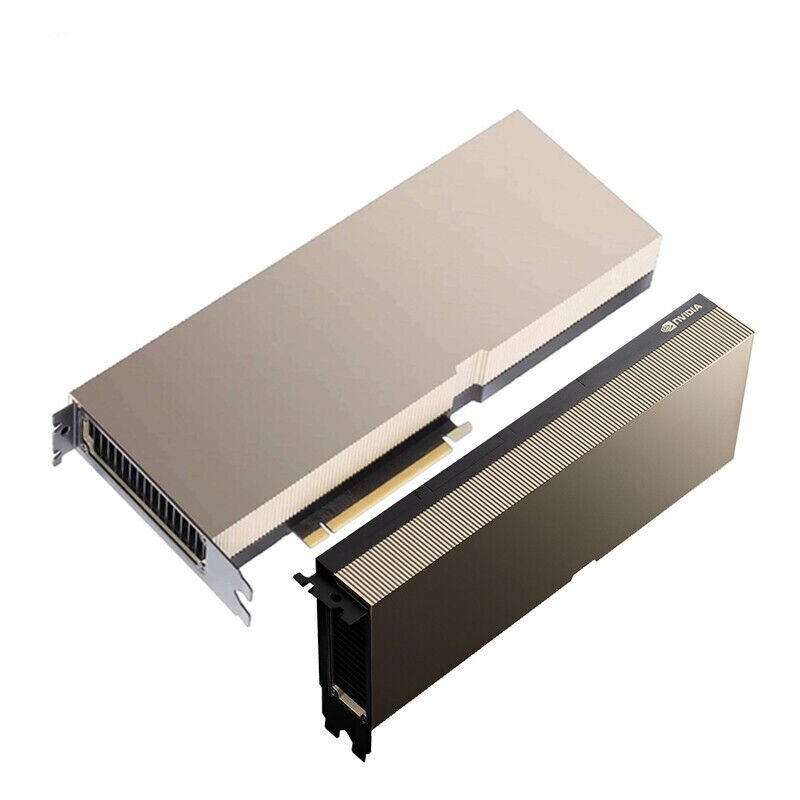

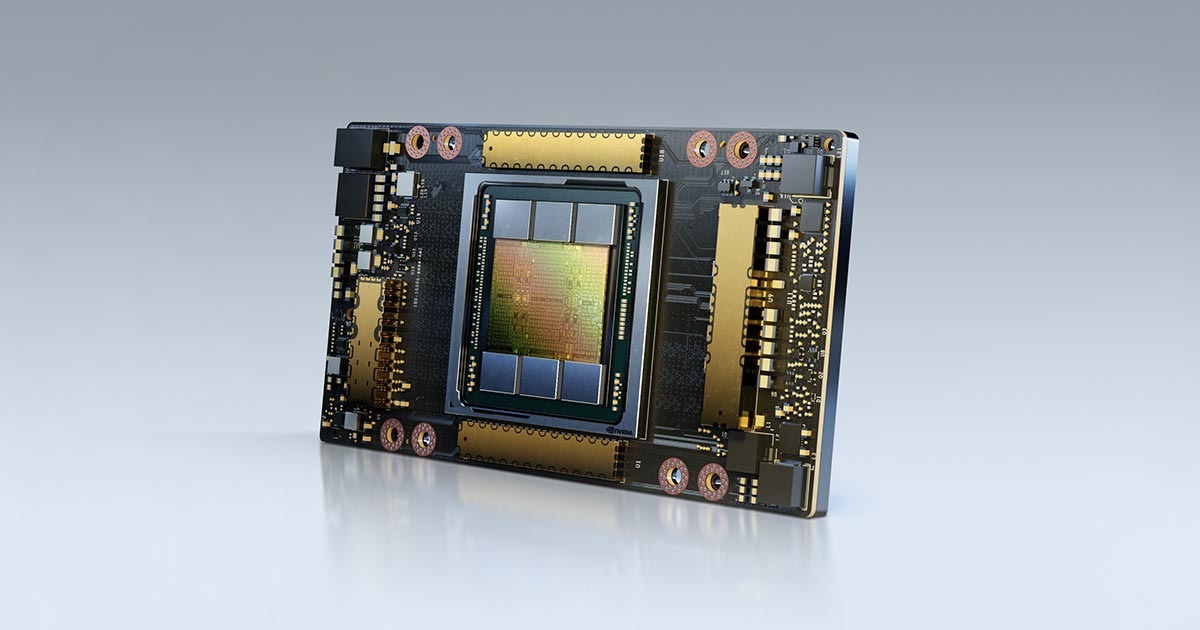

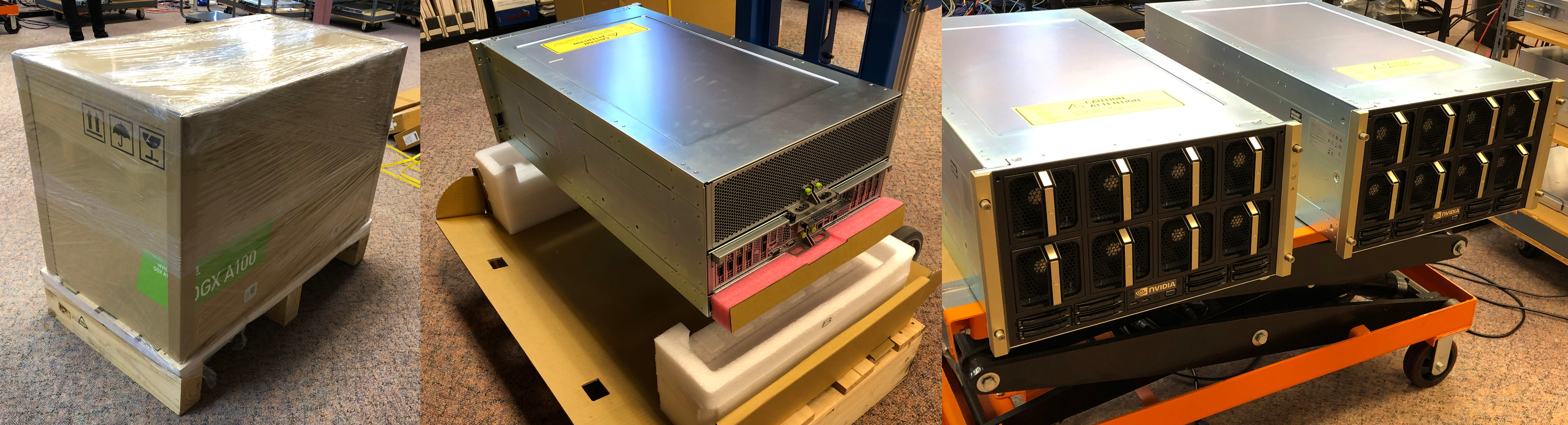

NVIDIA A100 Ampere 40GB CoWoS HBM2 PCIe 4.0 - Passive Cooling - 900-21001-0000-000 - GPU-NVTA100-40 | smicro.eu

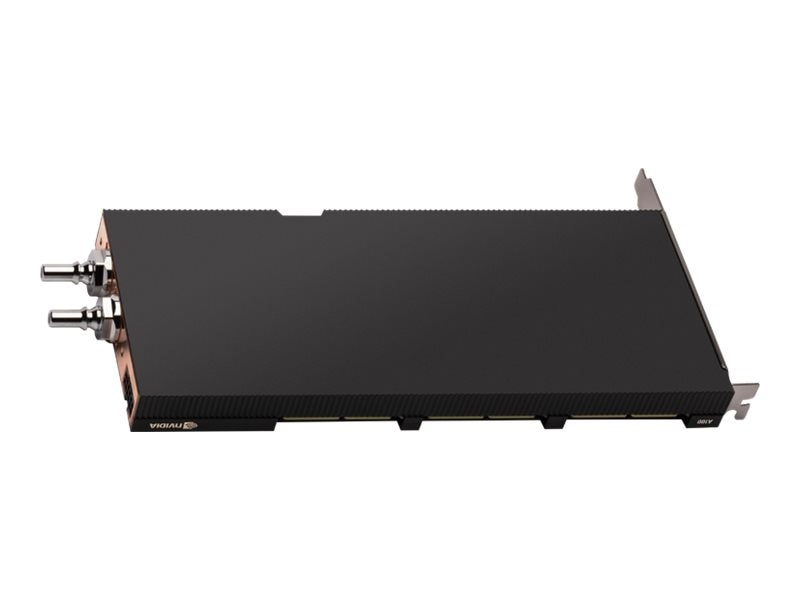

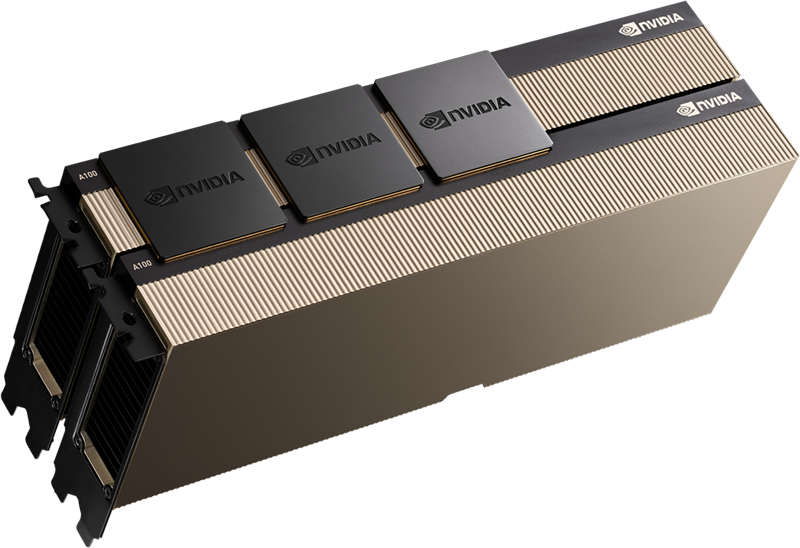

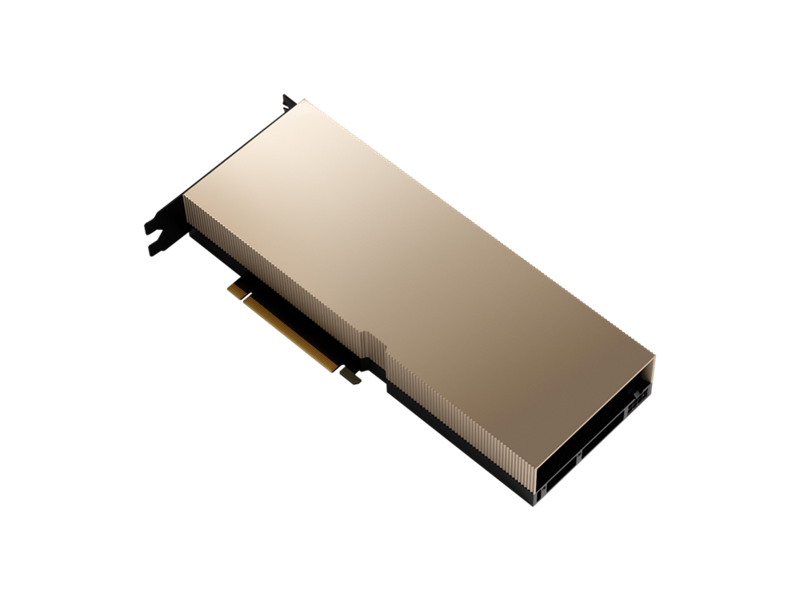

575TK - Refurbished - Dell NVIDIA A100 PCIe Ampere GPU Accelerator - 80GB HBM2e - 5120-bit Memory Interface - 1.94 TB/s Memory Bandwidth - PCIe 4.0 x16 - General-Purpose Graphics Processing Unit (GPGPU)

NVIDIA Sets AI Inference Records, Introduces A30 and A10 GPUs for Enterprise Servers | NVIDIA Newsroom